and “dept_id” 30 from “dept” dataset dropped from the results.

Left, a.k.a. left outer, join returns all rows from the left dataset regardless of whether a match is found on the right dataset. Where the join expression doesn’t match, it assigns null to the right-side columns of that record, and it drops records from the right dataset that have no match.

```python
empDF.join(deptDF, empDF.emp_dept_id == deptDF.dept_id, "left")
empDF.join(deptDF, empDF.emp_dept_id == deptDF.dept_id, "leftouter")
```

From our dataset, “emp_dept_id” 50 doesn’t have a record in the “dept” dataset; hence, this record contains null in the “dept” columns (dept_name & dept_id).

Outer, a.k.a. full or full outer, join returns all rows from both datasets; where the join expression doesn’t match, it returns null in the respective record columns.

```python
empDF.join(deptDF, empDF.emp_dept_id == deptDF.dept_id, "outer")
empDF.join(deptDF, empDF.emp_dept_id == deptDF.dept_id, "full")
empDF.join(deptDF, empDF.emp_dept_id == deptDF.dept_id, "fullouter")
```

From our “emp” dataset, “emp_dept_id” 50 doesn’t have a record in “dept”, hence the dept columns are null; “dept_id” 30 doesn’t have a record in “emp”, hence you see nulls in the emp columns. In the result of the above join expression, that unmatched “dept” row looks like this:

| emp_id | name | superior_emp_id | year_joined | emp_dept_id | gender | salary | dept_name | dept_id |
|---|---|---|---|---|---|---|---|---|
| null | null | null | null | null | null | null | Sales | 30 |
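The left and full outer semantics described above can be sketched in plain Python, without a Spark session. This is only an illustration of which rows survive and where nulls appear, not how Spark executes a join. The sample rows, the helper names `left_join` and `full_outer_join`, and the tuple layouts are all assumptions made up for this sketch; only the unmatched keys 50 (emp) and 30 (dept) mirror the text.

```python
# Illustrative rows only (hypothetical data):
# emp rows are (emp_id, name, emp_dept_id); dept rows are (dept_name, dept_id).
emp = [(1, "Smith", 10), (2, "Rose", 20), (6, "Brown", 50)]
dept = [("Finance", 10), ("Marketing", 20), ("Sales", 30)]


def left_join(left, right, left_key, right_key, right_width):
    """Keep every left row; pad with None where no right row matches.

    Assumes join keys are never None (SQL nulls never match each other).
    """
    out = []
    for l in left:
        matches = [r for r in right if r[right_key] == l[left_key]]
        if matches:
            out.extend(l + r for r in matches)
        else:
            out.append(l + (None,) * right_width)
    return out


def full_outer_join(left, right, left_key, right_key, left_width, right_width):
    """Left join, plus right rows that matched nothing, padded with None."""
    out = left_join(left, right, left_key, right_key, right_width)
    left_keys = {l[left_key] for l in left}
    for r in right:
        if r[right_key] not in left_keys:
            out.append((None,) * left_width + r)
    return out


left_rows = left_join(emp, dept, left_key=2, right_key=1, right_width=2)
full_rows = full_outer_join(emp, dept, left_key=2, right_key=1,
                            left_width=3, right_width=2)

for row in full_rows:
    print(row)
```

In this sketch the left join keeps the row with key 50 and fills its dept columns with `None`, while the full outer join additionally keeps the `Sales`/30 row with `None` in every emp column.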


Inner join is the default join in PySpark and the one most commonly used. It joins two datasets on key columns; where keys don’t match, the rows get dropped from both datasets (emp & dept).

```python
empDF.join(deptDF, empDF.emp_dept_id == deptDF.dept_id, "inner")
```

When we apply an inner join on our datasets, it drops “emp_dept_id” 50 from “emp” and “dept_id” 30 from “dept”.
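To make the inner join behavior concrete without a Spark session, here is a minimal plain-Python sketch. It only illustrates which rows survive; it is not how Spark executes the join. The sample rows and the helper name `inner_join` are assumptions invented for this example; only the unmatched keys 50 (emp) and 30 (dept) mirror the text.

```python
# Illustrative rows only (hypothetical data):
# emp rows are (emp_id, name, emp_dept_id); dept rows are (dept_name, dept_id).
emp = [(1, "Smith", 10), (2, "Rose", 20), (6, "Brown", 50)]
dept = [("Finance", 10), ("Marketing", 20), ("Sales", 30)]


def inner_join(left, right, left_key, right_key):
    """Return combined rows whose keys are equal; unmatched rows are dropped."""
    joined = []
    for l in left:
        for r in right:
            if l[left_key] == r[right_key]:
                joined.append(l + r)
    return joined


rows = inner_join(emp, dept, left_key=2, right_key=1)
for row in rows:
    print(row)
```

Neither the emp row with key 50 nor the dept row with key 30 appears in `rows`, which matches the behavior described for the `"inner"` join type.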

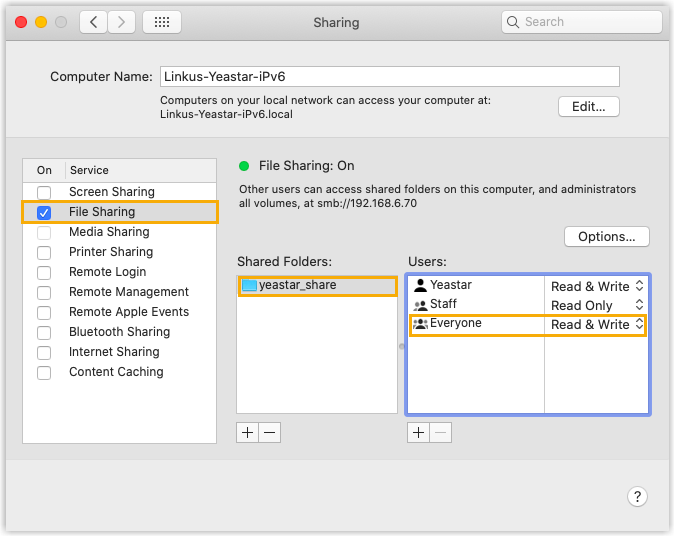

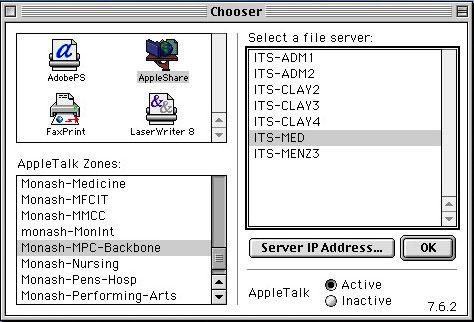


Before we jump into PySpark SQL join examples, first, let’s create “emp” and “dept” DataFrames. Here, column "emp_id" is unique in emp and "dept_id" is unique in the dept dataset, and emp_dept_id from emp references dept_id in the dept dataset.

```python
emp = [(1,"Smith",-1,"2018","10","M",3000), \
empColumns = ["emp_id","name","superior_emp_id","year_joined", \
empDF = spark.createDataFrame(data=emp, schema = empColumns)
deptDF = spark.createDataFrame(data=dept, schema = deptColumns)
```

Printing these with `show()` displays the “emp” and “dept” DataFrames on the console. Refer to the complete example below on how to create the spark object.

Below are the different join types PySpark supports.